Aden-Buie, Garrick. 2023.

epoxy: String Interpolation for Documents, Reports and Apps.

https://CRAN.R-project.org/package=epoxy.

Allaire, JJ, Yihui Xie, Christophe Dervieux, Jonathan McPherson, Javier Luraschi, Kevin Ushey, Aron Atkins, et al. 2025.

rmarkdown: Dynamic Documents for R.

https://github.com/rstudio/rmarkdown.

Arel-Bundock, Vincent. 2022.

“modelsummary: Data and Model Summaries in R.” Journal of Statistical Software 103 (1): 1–23.

https://doi.org/10.18637/jss.v103.i01.

Arel-Bundock, Vincent, Noah Greifer, and Andrew Heiss. 2024.

“How to Interpret Statistical Models Using marginaleffects for R and Python.” Journal of Statistical Software 111 (9): 1–32.

https://doi.org/10.18637/jss.v111.i09.

Barbone, Jordan Mark, and Jan Marvin Garbuszus. 2025.

openxlsx2: Read, Write and Edit “xlsx” Files.

https://janmarvin.github.io/openxlsx2/.

Bates, Douglas, Martin Mächler, Ben Bolker, and Steve Walker. 2015.

“Fitting Linear Mixed-Effects Models Using lme4.” Journal of Statistical Software 67 (1): 1–48.

https://doi.org/10.18637/jss.v067.i01.

Benchimol, Eric I., Liam Smeeth, Astrid Guttmann, Katie Harron, David Moher, Irene Petersen, Henrik T. Sørensen, Erik von Elm, and Sinéad M. Langan. 2015.

“The REporting of Studies Conducted Using Observational Routinely-Collected Health Data (RECORD) Statement.” PLOS Medicine 12 (10): e1001885.

https://doi.org/10.1371/journal.pmed.1001885.

Benoit, Kenneth, David Muhr, and Kohei Watanabe. 2021.

stopwords: Multilingual Stopword Lists.

https://CRAN.R-project.org/package=stopwords.

Bolker, Ben, and David Robinson. 2024.

broom.mixed: Tidying Methods for Mixed Models.

https://CRAN.R-project.org/package=broom.mixed.

Bryan, Jennifer. 2025.

googlesheets4: Access Google Sheets Using the Sheets API V4.

https://CRAN.R-project.org/package=googlesheets4.

Bryan, Jennifer, Craig Citro, and Hadley Wickham. 2025.

gargle: Utilities for Working with Google APIs.

https://CRAN.R-project.org/package=gargle.

Carroll, Orlagh U., Tim P. Morris, and Ruth H. Keogh. 2020.

“How Are Missing Data in Covariates Handled in Observational Time-to-Event Studies in Oncology? A Systematic Review.” BMC Medical Research Methodology 20 (1).

https://doi.org/10.1186/s12874-020-01018-7.

D’Agostino McGowan, Lucy, and Jennifer Bryan. 2025.

googledrive: An Interface to Google Drive.

https://CRAN.R-project.org/package=googledrive.

Davies, G. Matt, and Alan Gray. 2015.

“Don’t Let Spurious Accusations of Pseudoreplication Limit Our Ability to Learn from Natural Experiments (and Other Messy Kinds of Ecological Monitoring).” Ecology and Evolution 5 (22): 5295–5304.

https://doi.org/10.1002/ece3.1782.

Dayim, Alim. 2024.

consort: Create Consort Diagram.

https://CRAN.R-project.org/package=consort.

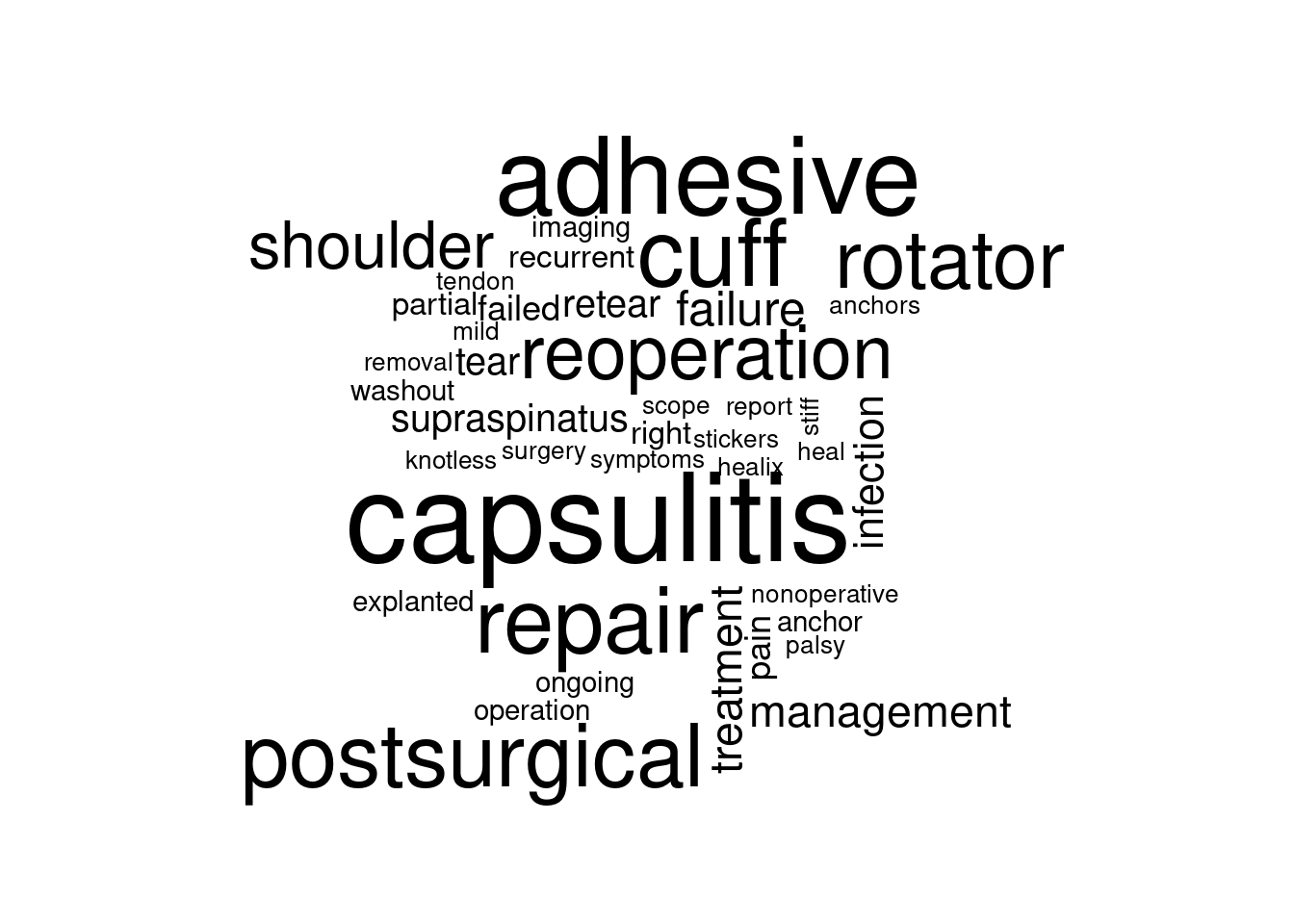

Fellows, Ian. 2018.

wordcloud: Word Clouds.

https://CRAN.R-project.org/package=wordcloud.

Felsch, Quinten, Victoria Mai, Holger Durchholz, Matthias Flury, Maximilian Lenz, Carl Capellen, and Laurent Audigé. 2021.

“Complications Within 6 Months After Arthroscopic Rotator Cuff Repair: Registry-Based Evaluation According to a Core Event Set and Severity Grading.” Arthroscopy: The Journal of Arthroscopic & Related Surgery 37 (1): 50–58.

https://doi.org/10.1016/j.arthro.2020.08.010.

Fuchs, Bruno, Dominik Weishaupt, Marco Zanetti, Juerg Hodler, and Christian Gerber. 1999.

“Fatty Degeneration of the Muscles of the Rotator Cuff: Assessment by Computed Tomography Versus Magnetic Resonance Imaging.” Journal of Shoulder and Elbow Surgery 8 (6): 599–605.

https://doi.org/10.1016/s1058-2746(99)90097-6.

Gohel, David, and Panagiotis Skintzos. 2025.

flextable: Functions for Tabular Reporting.

https://CRAN.R-project.org/package=flextable.

Grolemund, Garrett, and Hadley Wickham. 2011.

“Dates and Times Made Easy with lubridate.” Journal of Statistical Software 40 (3): 1–25.

https://www.jstatsoft.org/v40/i03/.

Horikoshi, Masaaki, and Yuan Tang. 2018.

ggfortify: Data Visualization Tools for Statistical Analysis Results.

https://CRAN.R-project.org/package=ggfortify.

Iannone, Richard, Joe Cheng, Barret Schloerke, Ellis Hughes, Alexandra Lauer, JooYoung Seo, Ken Brevoort, and Olivier Roy. 2025.

gt: Easily Create Presentation-Ready Display Tables.

https://CRAN.R-project.org/package=gt.

Kay, Matthew. 2024.

“ggdist: Visualizations of Distributions and Uncertainty in the Grammar of Graphics.” IEEE Transactions on Visualization and Computer Graphics 30 (1): 414–24.

https://doi.org/10.1109/TVCG.2023.3327195.

———. 2025.

ggdist: Visualizations of Distributions and Uncertainty.

https://doi.org/10.5281/zenodo.3879620.

Kirkley, Alexandra, Christine Alvarez, and Sharon Griffin. 2003.

“The Development and Evaluation of a Disease-Specific Quality-of-Life Questionnaire for Disorders of the Rotator Cuff: The Western Ontario Rotator Cuff Index.” Clinical Journal of Sport Medicine 13 (2): 84–92.

https://doi.org/10.1097/00042752-200303000-00004.

Koenker, Roger. 2025.

quantreg: Quantile Regression.

https://CRAN.R-project.org/package=quantreg.

Kuhn, Max, and Hadley Wickham. 2020.

tidymodels: A Collection of Packages for Modeling and Machine Learning Using Tidyverse Principles.

https://www.tidymodels.org.

Lädermann, Alexandre, Stephen S. Burkhart, Pierre Hoffmeyer, Lionel Neyton, Philippe Collin, Evan Yates, and Patrick J. Denard. 2016.

“Classification of Full-Thickness Rotator Cuff Lesions: A Review.” EFORT Open Reviews 1 (12): 420–30.

https://doi.org/10.1302/2058-5241.1.160005.

Larmarange, Joseph, and Daniel D. Sjoberg. 2025.

broom.helpers: Helpers for Model Coefficients Tibbles.

https://CRAN.R-project.org/package=broom.helpers.

Lazic, Stanley E. 2010.

“The Problem of Pseudoreplication in Neuroscientific Studies: Is It Affecting Your Analysis?” BMC Neuroscience 11 (1).

https://doi.org/10.1186/1471-2202-11-5.

Nguyen, Van Thu, Mishelle Engleton, Mauricia Davison, Philippe Ravaud, Raphael Porcher, and Isabelle Boutron. 2021.

“Risk of Bias in Observational Studies Using Routinely Collected Data of Comparative Effectiveness Research: A Meta-Research Study.” BMC Medicine 19 (1).

https://doi.org/10.1186/s12916-021-02151-w.

Pedersen, Thomas Lin. 2025.

patchwork: The Composer of Plots.

https://CRAN.R-project.org/package=patchwork.

R Core Team. 2024a.

R: A Language and Environment for Statistical Computing. Vienna, Austria: R Foundation for Statistical Computing.

https://www.R-project.org/.

———. 2024b.

R: A Language and Environment for Statistical Computing. Vienna, Austria: R Foundation for Statistical Computing.

https://www.R-project.org/.

Rashid, Mustafa S, Cushla Cooper, Jonathan Cook, David Cooper, Stephanie G Dakin, Sarah Snelling, and Andrew J Carr. 2017.

“Increasing Age and Tear Size Reduce Rotator Cuff Repair Healing Rate at 1 Year.” Acta Orthopaedica 88 (6): 606–11.

https://doi.org/10.1080/17453674.2017.1370844.

Rinker, Tyler W., and Dason Kurkiewicz. 2018.

pacman: Package Management for R. Buffalo, New York.

http://github.com/trinker/pacman.

Robinson, David, Alex Hayes, and Simon Couch. 2025.

broom: Convert Statistical Objects into Tidy Tibbles.

https://CRAN.R-project.org/package=broom.

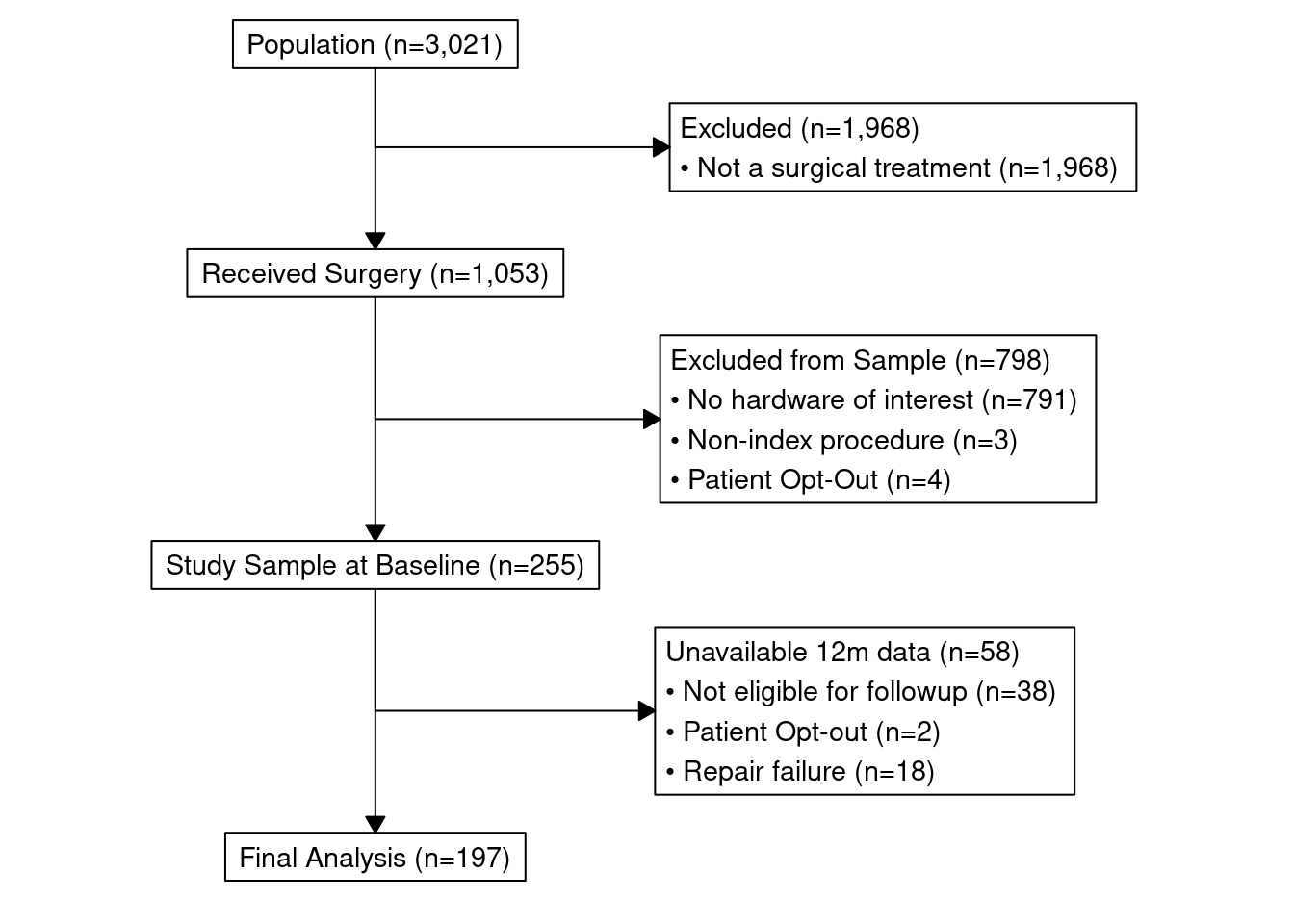

Scholes, Corey, Kevin Eng, Meredith Harrison-Brown, Milad Ebrahimi, Graeme Brown, Stephen Gill, and Richard Page. 2023.

“Patient Registry of Upper Limb Outcomes (PRULO): A Protocol for an Orthopaedic Clinical Quality Registry to Monitor Treatment Outcomes.”

http://dx.doi.org/10.1101/2023.02.01.23284494.

Silge, Julia, and David Robinson. 2016.

“tidytext: Text Mining and Analysis Using Tidy Data Principles in R.” Journal of Open Source Software 1 (3).

https://doi.org/10.21105/joss.00037.

Sjoberg, Daniel D., Mark Baillie, Charlotta Fruechtenicht, Steven Haesendonckx, and Tim Treis. 2025.

ggsurvfit: Flexible Time-to-Event Figures.

https://CRAN.R-project.org/package=ggsurvfit.

Sjoberg, Daniel D., and Teng Fei. 2024.

tidycmprsk: Competing Risks Estimation.

https://CRAN.R-project.org/package=tidycmprsk.

Sjoberg, Daniel D., Karissa Whiting, Michael Curry, Jessica A. Lavery, and Joseph Larmarange. 2021.

“Reproducible Summary Tables with the gtsummary Package.” The R Journal 13: 570–80.

https://doi.org/10.32614/RJ-2021-053.

Sjoberg, Daniel D., Abinaya Yogasekaram, and Emily de la Rua. 2025.

cardx: Extra Analysis Results Data Utilities.

https://CRAN.R-project.org/package=cardx.

Tang, Yuan, Masaaki Horikoshi, and Wenxuan Li. 2016.

“ggfortify: Unified Interface to Visualize Statistical Result of Popular R Packages.” The R Journal 8 (2): 474–85.

https://doi.org/10.32614/RJ-2016-060.

Tennant, Peter W G, Eleanor J Murray, Kellyn F Arnold, Laurie Berrie, Matthew P Fox, Sarah C Gadd, Wendy J Harrison, et al. 2020.

“Use of Directed Acyclic Graphs (DAGs) to Identify Confounders in Applied Health Research: Review and Recommendations.” International Journal of Epidemiology 50 (2): 620–32.

https://doi.org/10.1093/ije/dyaa213.

Therneau, Terry M., and Patricia M. Grambsch. 2000. Modeling Survival Data: Extending the Cox Model. New York: Springer.

Therneau, Terry M. 2024.

A Package for Survival Analysis in R.

https://CRAN.R-project.org/package=survival.

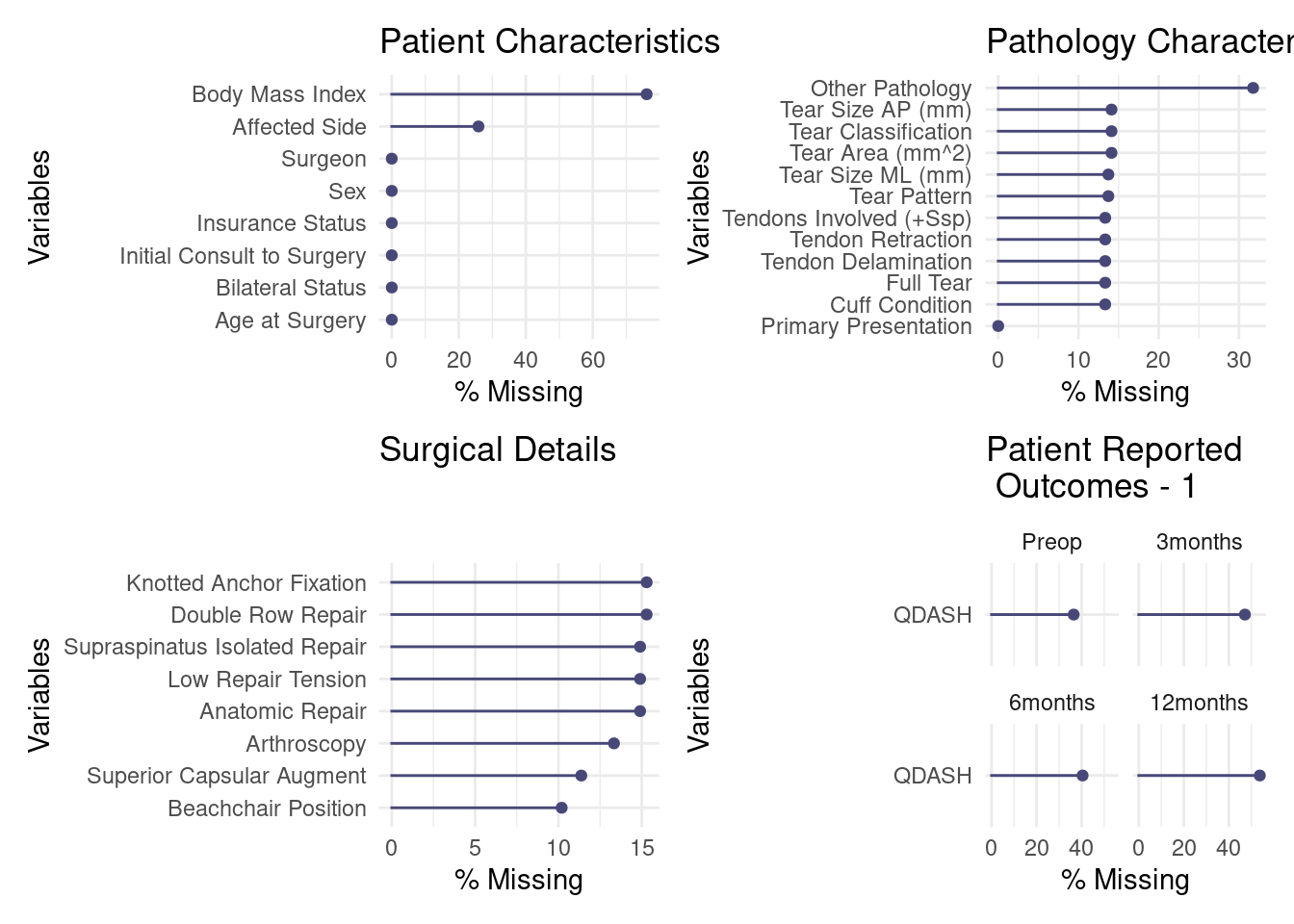

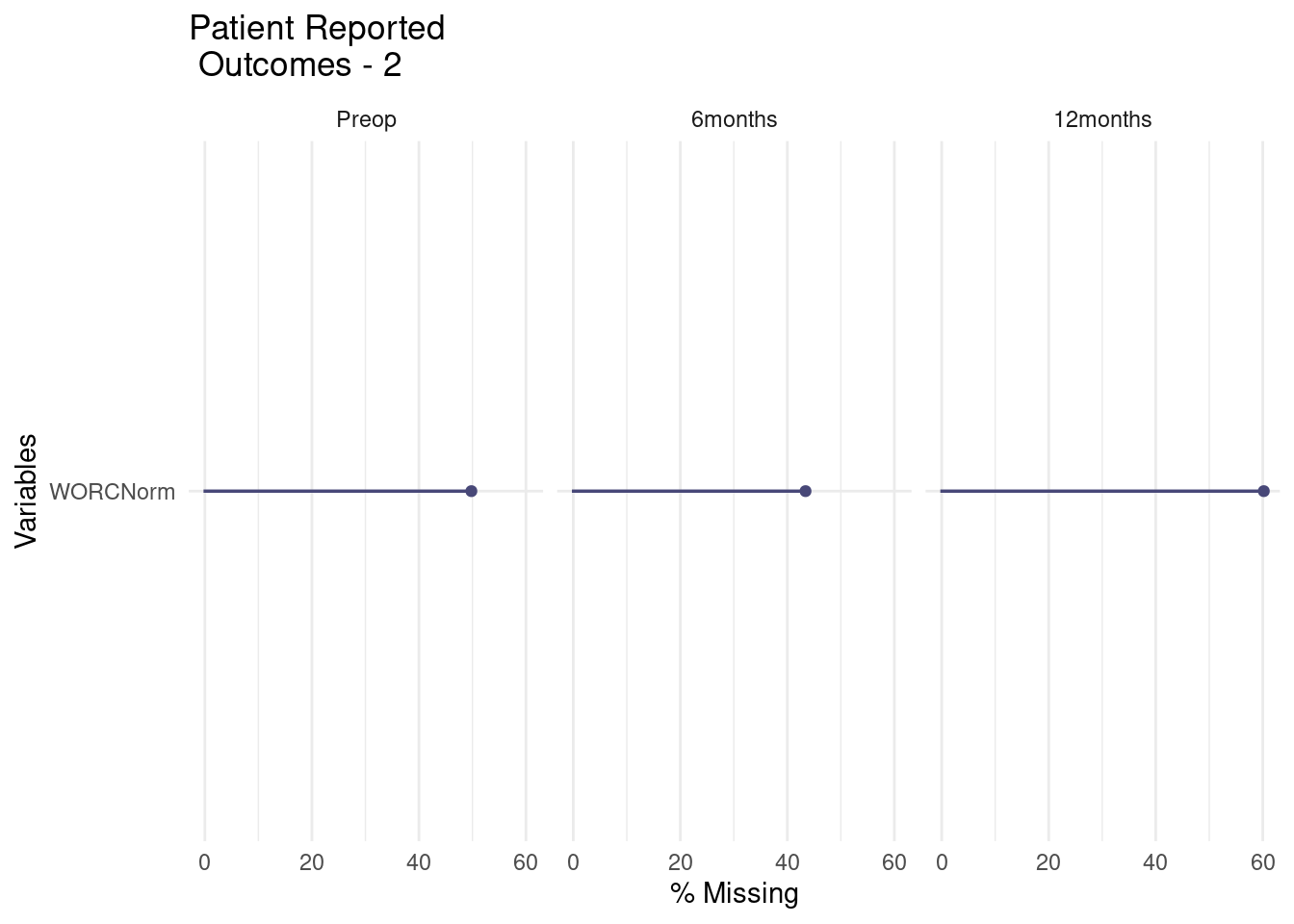

Tierney, Nicholas, and Dianne Cook. 2023.

“Expanding Tidy Data Principles to Facilitate Missing Data Exploration, Visualization and Assessment of Imputations.” Journal of Statistical Software 105 (7): 1–31.

https://doi.org/10.18637/jss.v105.i07.

van Buuren, Stef, and Karin Groothuis-Oudshoorn. 2011.

“mice: Multivariate Imputation by Chained Equations in R.” Journal of Statistical Software 45 (3): 1–67.

https://doi.org/10.18637/jss.v045.i03.

White, Ian R., Patrick Royston, and Angela M. Wood. 2010.

“Multiple Imputation Using Chained Equations: Issues and Guidance for Practice.” Statistics in Medicine 30 (4): 377–99.

https://doi.org/10.1002/sim.4067.

Wickham, Hadley. 2016.

ggplot2: Elegant Graphics for Data Analysis. Springer-Verlag New York.

https://ggplot2.tidyverse.org.

———. 2025a.

forcats: Tools for Working with Categorical Variables (Factors).

https://CRAN.R-project.org/package=forcats.

———. 2025b.

stringr: Simple, Consistent Wrappers for Common String Operations.

https://CRAN.R-project.org/package=stringr.

Wickham, Hadley, Mara Averick, Jennifer Bryan, Winston Chang, Lucy D’Agostino McGowan, Romain François, Garrett Grolemund, et al. 2019.

“Welcome to the tidyverse.” Journal of Open Source Software 4 (43): 1686.

https://doi.org/10.21105/joss.01686.

Wickham, Hadley, Romain François, Lionel Henry, Kirill Müller, and Davis Vaughan. 2023.

dplyr: A Grammar of Data Manipulation.

https://CRAN.R-project.org/package=dplyr.

Wickham, Hadley, Jim Hester, and Jennifer Bryan. 2024.

readr: Read Rectangular Text Data.

https://CRAN.R-project.org/package=readr.

Wickham, Hadley, Thomas Lin Pedersen, and Dana Seidel. 2025.

scales: Scale Functions for Visualization.

https://CRAN.R-project.org/package=scales.

Wickham, Hadley, Davis Vaughan, and Maximilian Girlich. 2024.

tidyr: Tidy Messy Data.

https://CRAN.R-project.org/package=tidyr.

Xie, Yihui. 2014. “knitr: A Comprehensive Tool for Reproducible Research in R.” In Implementing Reproducible Computational Research, edited by Victoria Stodden, Friedrich Leisch, and Roger D. Peng. Chapman and Hall/CRC.

———. 2015.

Dynamic Documents with R and knitr. 2nd ed. Boca Raton, Florida: Chapman and Hall/CRC.

https://yihui.org/knitr/.

———. 2025a.

knitr: A General-Purpose Package for Dynamic Report Generation in R.

https://yihui.org/knitr/.

———. 2025b.

litedown: A Lightweight Version of R Markdown.

https://CRAN.R-project.org/package=litedown.

Xie, Yihui, J. J. Allaire, and Garrett Grolemund. 2018.

R Markdown: The Definitive Guide. Boca Raton, Florida: Chapman and Hall/CRC.

https://bookdown.org/yihui/rmarkdown.

Xie, Yihui, Christophe Dervieux, and Emily Riederer. 2020.

R Markdown Cookbook. Boca Raton, Florida: Chapman and Hall/CRC.

https://bookdown.org/yihui/rmarkdown-cookbook.